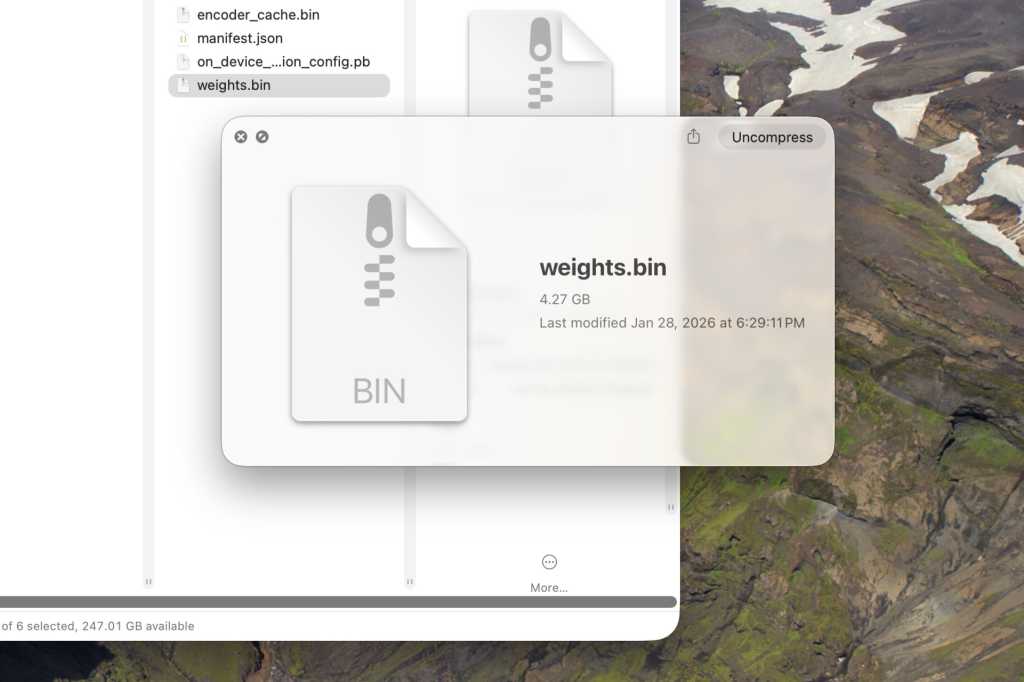

Imagine Chrome working away in the background, siloed from its main window. No prompts. No dialogs. While you’re reading the news, it’s busy unpacking a 4GB file named weights.bin. You didn’t ask for it. You’ll never be told about it. This is the new silent deployment strategy for Google’s on-device Gemini Nano AI model, and it’s landing on millions of machines right now.

Security researcher and lawyer Alexander “That Privacy Guy” Hanff has meticulously documented an architectural choice that redefines user agency: Google Chrome silently downloads a massive AI payload without consent. He argues that this violates European privacy law and carries a potential climate cost in the tens of thousands of tonnes of CO₂.

This isn’t a minor cache file. It’s a foundational part of the Gemini Nano model. According to Hanff’s controlled test, Chrome scans your hardware, declares your machine eligible, and downloads the full package over 14 minutes and 28 seconds of idle browsing time. The kicker? Delete the file, and Chrome simply reinstalls it on next launch. Unless you dig into chrome://flags or apply enterprise policies most users don’t have, your choice is overridden every time.

The Mechanics of a Silent Takeover

The technical evidence is forensic-grade. On macOS, Hanff used the file system event log stored in .fseventsd, an OS-level record maintained independently of Chrome, to capture the entire unconsented install sequence on a fresh Chrome profile with zero human interaction. Three concurrent subprocesses spawned, writing weights.bin, manifest.json, and ancillary files into a hidden OptGuideOnDeviceModel directory. Chrome’s own Local State JSON confirms the operation: it records a performance_class and vram_mb check to see if your machine is worthy of receiving this digital imposition.
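To look for those eligibility entries yourself, here is a minimal Python sketch. It assumes only that the Local State file is JSON and that the keys are literally named performance_class and vram_mb, as described above. Their exact nesting is not given here, so the sketch searches at any depth rather than guessing a path. The function names are hypothetical.

```python
from __future__ import annotations

import json
from pathlib import Path
from typing import Any, Iterator


def find_keys(node: Any, wanted: set[str], path: str = "") -> Iterator[tuple[str, Any]]:
    """Yield (dotted_path, value) for every key in `wanted`, at any depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}.{key}" if path else key
            if key in wanted:
                yield child, value
            # Keep descending: the same key may appear in several places.
            yield from find_keys(value, wanted, child)
    elif isinstance(node, list):
        for index, item in enumerate(node):
            yield from find_keys(item, wanted, f"{path}[{index}]")


def inspect_local_state(local_state: Path) -> list[tuple[str, Any]]:
    """Load a Local State file and return any hardware-eligibility entries."""
    data = json.loads(local_state.read_text(encoding="utf-8"))
    return list(find_keys(data, {"performance_class", "vram_mb"}))
```

Pass inspect_local_state the path to the Local State file in your Chrome user data directory. An empty list means neither key was found, which says nothing either way about whether the model was downloaded.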

Chrome’s internal feature flags reveal the strategic intent. The flag OnDeviceModelBackgroundDownload<OnDeviceModelBackgroundDownload triggers the download before the ShowOnDeviceAiSettings<OnDeviceModelBackgroundDownload flag makes the relevant settings UI visible to the user. The ordering is deliberate: first you get the payload, then you are allowed to know about the feature. This is a classic dark pattern: the capability is installed before any user-facing choice is presented.

The Deceptive AI Mode Pivot

The irony is almost cruel. A user who spots their disk space shrinking might also notice Chrome’s new “AI Mode” pill in the omnibox. Given a 4GB Gemini Nano file on their drive, they might reasonably assume this glossy new feature uses the local model for privacy and speed.

They’d be wrong. The “AI Mode” pill launches a Search Generative Experience that sends your queries straight to Google’s servers. The local model is used for buried features like tab organization or writing assistance in text fields. This creates a deceptive dynamic: the user bears the cost (storage, bandwidth) for a Google asset that doesn’t even power the most prominent AI feature they see. It’s a user-hostile bait-and-switch, a hollow promise of local processing used as a smokescreen for a mandatory cloud service. It resembles other privacy vulnerabilities in browser sessions, where user expectations are systematically violated by platform design.

The Scale of the Infraction: 240 GWh and 60,000 Tons of CO₂

The privacy violation is matched by a staggering environmental one. When you scale a 4GB download across Chrome’s billions of users, the numbers become punishing. Hanff’s calculation uses standard network energy intensity figures:

The energy required to transfer data is estimated at 0.06 kWh per GB. The grid emissions factor is conservatively set at 0.25 kg CO₂e per kWh.

Per Device Cost of One Push:

- Bandwidth: 4 GB

- Energy: 4 × 0.06 = 0.24 kWh

- CO₂: 0.24 × 0.25 = 0.06 kg CO₂e

Aggregate that across the web:

| Devices Receiving Push | Total Data Transferred | Total Energy | Total CO₂e Impact |
|---|---|---|---|
| 100 million (~3% of users) | 400 Petabytes | 24 GWh | 6,000 tonnes |
| 500 million (~15% of users) | 2 Exabytes | 120 GWh | 30,000 tonnes |
| 1 billion (~30% of users) | 4 Exabytes | 240 GWh | 60,000 tonnes |
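The arithmetic behind these figures is easy to reproduce. The sketch below uses only the quoted inputs (4 GB per device, 0.06 kWh per GB, 0.25 kg CO₂e per kWh) and decimal units (1 PB = 10⁶ GB). The names PushCost and push_cost are made up for illustration.

```python
from dataclasses import dataclass

KWH_PER_GB = 0.06          # network energy intensity quoted above
KG_CO2E_PER_KWH = 0.25     # grid emissions factor quoted above
MODEL_SIZE_GB = 4          # approximate size of the download


@dataclass(frozen=True)
class PushCost:
    devices: int
    data_pb: float       # petabytes transferred (decimal)
    energy_gwh: float    # gigawatt-hours
    co2e_tonnes: float   # metric tonnes of CO2e


def push_cost(devices: int, size_gb: float = MODEL_SIZE_GB) -> PushCost:
    """Delivery-only cost of one push to `devices` machines."""
    total_gb = devices * size_gb
    energy_kwh = total_gb * KWH_PER_GB
    co2e_kg = energy_kwh * KG_CO2E_PER_KWH
    return PushCost(devices, total_gb / 1e6, energy_kwh / 1e6, co2e_kg / 1e3)


if __name__ == "__main__":
    for devices in (100_000_000, 500_000_000, 1_000_000_000):
        cost = push_cost(devices)
        print(f"{devices:>13,} devices: {cost.data_pb:,.0f} PB, "
              f"{cost.energy_gwh:,.0f} GWh, {cost.co2e_tonnes:,.0f} t CO2e")
```

Both factors are linear multipliers, so swapping in a different intensity or grid figure scales every row of the table by the same ratio.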

That 60,000-tonne figure is the delivery-only cost for a single silent push to roughly one-third of Chrome users. It doesn’t include the repeated re-downloads for users who try to delete the file, future model updates (these weights are not static), or the embodied carbon of allocating NAND flash storage on billions of devices. Conservatively, that’s the annual carbon output of over 13,000 average EU passenger cars, spent before a single AI query is processed. This stands in stark contrast to the philosophy behind smaller, more efficient open-source AI models, where size and deployment impact are primary considerations.

The Legal Reckoning: A Clear Breach of EU Law

Hanff, a lawyer specializing in digital privacy, argues this is not a gray area but a clear-cut violation. The legal case rests on three pillars:

- ePrivacy Directive (Article 5(3)): This law explicitly forbids “storing of information… in the terminal equipment of a subscriber or user” without “prior, freely-given, specific, informed, and unambiguous consent.” A browser can function without a 4GB AI model, so “strictly necessary” doesn’t apply. No consent was sought.

- GDPR (Article 5, Lawfulness, Fairness, Transparency): The processing of user data, including the hardware profiling to determine eligibility, lacks a lawful basis and is fundamentally opaque.

- GDPR (Article 25, Data Protection by Design and by Default): This principle mandates minimization by default. Pre-staging a 4GB model “just in case” a user might one day use a text-suggestion feature is the antithesis of this rule.

The chilling precedent is that Google, a company with a market-dominant browser, treats its users’ devices as mere deployment targets for its own product roadmap, using a “deploy first, explain maybe later” playbook also seen with Anthropic’s desktop software upgrades.

The User’s Path Forward (Spoiler: It’s Paved with Spite)

Chrome doesn’t make it easy, but you can reclaim control. Deleting the weights.bin file on its own is pointless: Chrome will just redownload it. If you want to confirm the file is there, this is where it lives.

For Windows users, navigate to C:\Users\<YourUsername>\AppData\Local\Google\Chrome\User Data\OptGuideOnDeviceModel\.

For macOS users, find it in ~/Library/Application Support/Google/Chrome/OptGuideOnDeviceModel/.
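To check whether the model is on your machine and how much space it takes, here is a minimal Python sketch. It builds the two paths listed above and sums the size of everything under the directory. The function names are hypothetical, and other platforms are deliberately not covered because no paths are given for them.

```python
from __future__ import annotations

import os
import sys
from pathlib import Path


def candidate_model_dir() -> Path | None:
    """Return the OptGuideOnDeviceModel path for Windows or macOS, else None."""
    if sys.platform == "win32":
        local = os.environ.get("LOCALAPPDATA")
        base = Path(local) if local else Path.home() / "AppData" / "Local"
        return base / "Google" / "Chrome" / "User Data" / "OptGuideOnDeviceModel"
    if sys.platform == "darwin":
        return (Path.home() / "Library" / "Application Support"
                / "Google" / "Chrome" / "OptGuideOnDeviceModel")
    return None  # path not documented here for other platforms


def directory_size_bytes(root: Path) -> int:
    """Sum the sizes of all regular files under `root`, recursively."""
    return sum(p.stat().st_size for p in root.rglob("*") if p.is_file())


def report(root: Path) -> str:
    """One-line summary: either 'not present' or the size in decimal GB."""
    if not root.is_dir():
        return f"{root}: not present"
    return f"{root}: {directory_size_bytes(root) / 1e9:.2f} GB"
```

Calling report(candidate_model_dir()) on Windows or macOS prints either the directory’s total size or a note that it is absent. The sketch only reads file metadata and never deletes anything.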

The only effective stopgap is to disable the feature:

1. Open Chrome and type chrome://flags into the address bar.

2. Search for “Enables optimization guide on device”.

3. Set the dropdown to Disabled.

A more permanent, enterprise-level solution is to set the group policy for the local foundational GenAI model to “Do not download model.” For the average user, disabling the flag or navigating to Settings > System and toggling “On-device AI” to Off will work, though you lose all locally-powered AI features. Ironically, opting out requires exactly the kind of deep, non-intuitive knowledge that the silent download was designed to sidestep.
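For administrators who deploy Chrome policies as JSON, the fragment could be generated as below. This is a sketch under a loud assumption: the policy identifier GenAILocalFoundationalModelSettings and the value 1 for “Do not download model” reflect my reading of Chrome’s enterprise policy list and are not stated in Hanff’s write-up. Verify both against the current policy documentation before deploying.

```python
import json

# ASSUMPTION: identifier and value taken from my understanding of the Chrome
# Enterprise policy list; confirm against official documentation before use.
POLICY_NAME = "GenAILocalFoundationalModelSettings"
DO_NOT_DOWNLOAD = 1  # assumed to correspond to "Do not download model"


def build_policy_json() -> str:
    """Return a managed-policy JSON fragment that disables the model download."""
    return json.dumps({POLICY_NAME: DO_NOT_DOWNLOAD}, indent=2)


if __name__ == "__main__":
    print(build_policy_json())
```

How the fragment is applied depends on the platform and management tooling (Group Policy on Windows, a managed policy file or configuration profile elsewhere). The sketch only produces the JSON and writes nothing to disk.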

A Symptom of a Bigger Shift

This incident isn’t isolated. It reflects a broader industry push to embed AI into everything, often by force. It highlights a fundamental conflict: the promise of client-side AI execution reducing cloud dependency (as seen in projects like Qwen 3.5 on WebGPU) is being twisted. The goal should be user empowerment: local models give you speed, privacy, and offline capability. Google’s execution treats the local model as a mandatory, non-consensual asset that conveniently cements its AI ecosystem into your operating environment.

The EU regulators who levied a roughly $5 billion fine against Google in 2018, over antitrust abuses tied to Android, now have a new, tangible target. The question is no longer if Google can deploy AI, but whether it can do so without treating every device it touches as its own personal cloud node.

If the last decade was about apps tracking your clicks, the next one is shaping up to be about apps installing their own brains onto your hardware. The line between software distribution and digital colonization is getting blurry, and Google just crossed it. Users who want privacy-focused local AI model alternatives will have to look beyond the default choices. The browser wars have entered a new, more invasive phase. The fight for your consent, and your disk space, has begun.