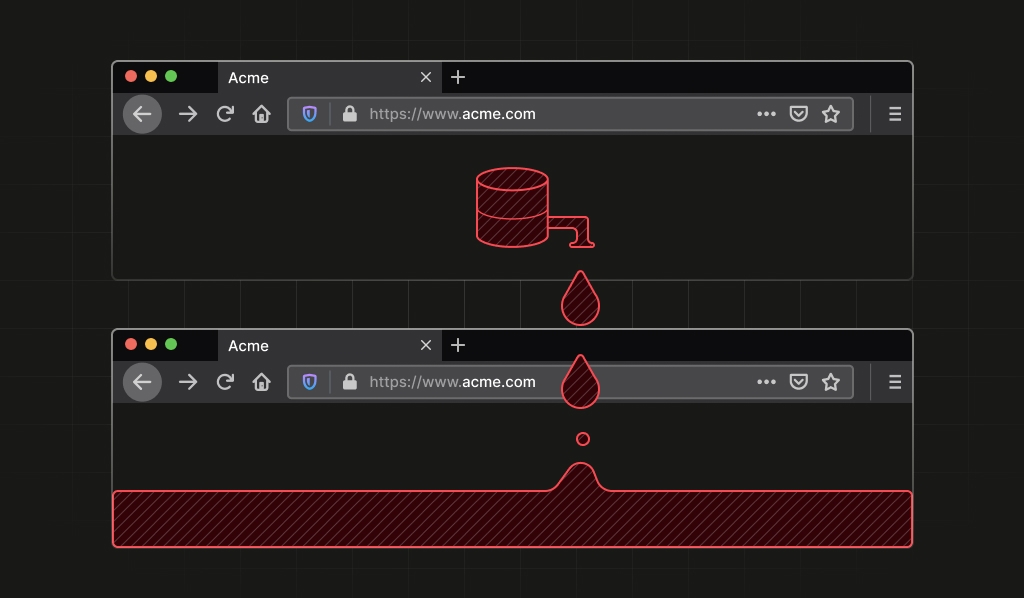

You close your Firefox Private Window or click “New Identity” in Tor Browser, believing your slate is wiped clean. The cookies are gone, the history erased. You are, in theory, a new user. This is the cornerstone of modern digital privacy, the promise of isolation between sessions. A recent discovery by security researchers at Fingerprint shatters that illusion for Firefox-based browsers, exposing a critical architectural flaw that allows websites to link your supposedly isolated identities together with a staggering degree of certainty.

This isn’t about cookies. This is about exploiting the browser’s own internal machinery to generate a stable, cross-origin, process-lifetime identifier. For Tor Browser, a tool designed for “unlinkability”, this is a critical failure.

Let’s break down how this works, why it’s so significant, and what it reveals about the fragile state of privacy-first browser design.

The Core Vulnerability: indexedDB.databases() as a Process Fingerprint

The bug resides in Firefox’s implementation of the indexedDB.databases() API in Private Browsing mode. IndexedDB is a standard web API for client-side storage, and databases() is supposed to return metadata about databases created by the current website’s origin. Simple enough.

The vulnerability stems from how Firefox handles this in Private Browsing. To enhance privacy, Firefox doesn’t store database names directly on disk. Instead, it maps them to UUIDs in a process-scoped hash table. When indexedDB.databases() is called, Firefox collects the database names by iterating over a hash set. The iteration order is neither randomized nor sorted: it simply follows the internal layout of that structure, which stays the same for as long as the process runs.

This is the fatal leak. Two different websites (or even two visits to the same site in supposedly isolated sessions) can each create the same set of database names and call indexedDB.databases(). They will observe the exact same order of returned names for the lifetime of the Firefox process. That order acts as a stable identifier for the running browser.
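The effect can be reproduced with a toy model. The sketch below is not Firefox’s code: ToyProcess, tokenFor, and databases are hypothetical names, and sorting by a random per-process token merely stands in for iterating a hash set whose layout depends on per-process UUIDs.

```javascript
// Toy model only: this does not mirror Firefox internals.
// One ToyProcess instance stands in for one running browser process.
class ToyProcess {
  constructor() {
    // Process-wide table: database name -> random token (stand-in for a UUID).
    this.tokens = new Map();
  }

  tokenFor(name) {
    if (!this.tokens.has(name)) {
      this.tokens.set(name, Math.random());
    }
    return this.tokens.get(name);
  }

  // Stand-in for hash set iteration: the order follows the tokens,
  // not the names and not the calling origin.
  databases(names) {
    return [...names].sort((a, b) => this.tokenFor(a) - this.tokenFor(b));
  }
}

const names = ['a', 'b', 'c', 'd', 'e', 'f', 'g', 'h'];
const browserProcess = new ToyProcess();

// Two unrelated "origins" ask the same process and see the same permutation.
const seenBySiteA = browserProcess.databases(names);
const seenBySiteB = browserProcess.databases(names);
console.log('Site A:', seenBySiteA.join(','));
console.log('Site B:', seenBySiteB.join(','));

// A fresh process (a full restart) draws new tokens, so the order
// almost certainly differs.
const restarted = new ToyProcess();
console.log('After restart:', restarted.databases(names).join(','));
```

The point of the model is that nothing about the calling site enters the ordering. Only process-wide state does, which is why clearing cookies or opening a new private window changes nothing.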

The Technical Details

The mapping is performed in GetDatabaseFilenameBase(), and the hash set iteration happens in GetDatabasesOp::DoDatabaseWork(). Crucially, this hash table, StorageDatabaseNameHashtable, is a global, process-scoped object. It’s not tied to an origin or a private window. It persists until the browser is fully restarted.

Proof-of-Concept Script

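```javascript
// Create a predictable set of databases
const dbNames = ['a','b','c','d','e','f','g','h','i','j','k','l','m','n','o','p'];

// Wrap each open request in a promise so we can wait until the database exists
const openDb = (name) => new Promise((resolve, reject) => {
  const request = indexedDB.open(name);
  request.onsuccess = () => {
    request.result.close();
    resolve();
  };
  request.onerror = () => reject(request.error);
});

// Retrieve and log the order once every database has been created
Promise.all(dbNames.map(openDb))
  .then(() => indexedDB.databases())
  .then(dbs => {
    const order = dbs.map(db => db.name);
    console.log('Observed order:', order);
  });
```

Run this script from two different origins in a vulnerable Firefox Private Browsing session, and both will log the same permutation, like g,c,p,a,l,f,n,d,j,b,o,h,e,m,i,k. That permutation is your browser’s fingerprint for that session. It doesn’t change until you quit Firefox entirely.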
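A permutation is awkward to store and compare, so a tracker would likely reduce it to a single value. The sketch below shows one way to do that, by ranking the permutation among all orderings of the same names (a Lehmer-code ranking). The function name permutationId is hypothetical, and the ranking is an illustrative choice, not something described by the researchers.

```javascript
// Hypothetical helper: rank a permutation among all orderings of its names.
// BigInt keeps the arithmetic exact if the set grows
// (19! already exceeds Number.MAX_SAFE_INTEGER).
function permutationId(order) {
  const remaining = [...order].sort(); // canonical reference order
  let rank = 0n;
  for (const name of order) {
    const idx = remaining.indexOf(name);
    rank = rank * BigInt(remaining.length) + BigInt(idx);
    remaining.splice(idx, 1);
  }
  return rank;
}

// The example permutation from the text, reduced to one hex string.
const observed = 'g,c,p,a,l,f,n,d,j,b,o,h,e,m,i,k'.split(',');
console.log(permutationId(observed).toString(16));
```

The sorted order maps to 0 and the fully reversed order maps to n! - 1, so every distinct permutation gets a distinct value.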

Why This Torpedoes Tor Browser’s “New Identity” Feature

For Firefox, this is a privacy bug. For Tor Browser, it’s a catastrophic failure of a core security feature. Tor Browser’s “New Identity” function is explicitly designed to “[prevent] your subsequent browser activity from being linkable to what you were doing before.” It flushes cookies, clears history, and establishes fresh Tor circuits.

But this vulnerability persists through a “New Identity” reset. As long as the underlying Tor Browser (Firefox) process remains running—which it often does between uses—the stable identifier derived from the hash table iteration order does not change.

A website visited before the “New Identity” command and after it can now definitively link those two sessions. This fundamentally breaks the threat model Tor Browser is built upon. It provides a deterministic, high-entropy identifier that websites can use for cross-session tracking without any cookies or local storage.
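To make the linking step concrete, here is a minimal sketch of what a tracking server could do with the reported orders. The visit log, the session labels, and the function name linkSessions are all invented for illustration.

```javascript
// Hypothetical server-side view: each visit reports the order it observed.
const visits = [
  { session: 'before New Identity', order: ['g', 'c', 'a', 'b'] },
  { session: 'after New Identity', order: ['g', 'c', 'a', 'b'] },
  { session: 'different browser process', order: ['b', 'a', 'g', 'c'] },
];

// Group sessions by the permutation they reported.
function linkSessions(visitLog) {
  const groups = new Map();
  for (const { session, order } of visitLog) {
    const key = JSON.stringify(order); // safe even if a name contains commas
    if (!groups.has(key)) {
      groups.set(key, []);
    }
    groups.get(key).push(session);
  }
  return groups;
}

for (const [key, sessions] of linkSessions(visits)) {
  console.log(key, '->', sessions.join(' + '));
}
```

The two sessions on either side of “New Identity” land in the same group because the process never restarted. No cookie or stored value is involved.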

The researchers calculated that with just 16 controlled database names, the theoretical entropy space is about 44 bits (log2(16!)), which is more than enough to uniquely identify a vast number of concurrent browser instances.
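The 44-bit figure is easy to check. This short sketch sums log2(k) for k up to n, which equals log2(n!). The function name permutationEntropyBits is ours.

```javascript
// Bits needed to distinguish every ordering of n distinct names: log2(n!)
function permutationEntropyBits(n) {
  let bits = 0;
  for (let k = 2; k <= n; k++) {
    bits += Math.log2(k);
  }
  return bits;
}

console.log(permutationEntropyBits(16).toFixed(2)); // "44.25"
```

This is an upper bound that assumes every permutation is equally likely. The real distribution depends on the hash table’s behavior, which is why the text calls it a theoretical entropy space.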

The Broader Context: Browser Fingerprinting as an Architectural Arms Race

This IndexedDB flaw is not an isolated incident. It’s a symptom of a deeper architectural challenge. Browser privacy modes and anti-fingerprinting measures often focus on clearing state (cookies, localStorage) and fuzzing high-level APIs like navigator.userAgent. However, they frequently overlook low-level, deterministic behaviors of internal data structures.

As privacy consultant Alexander Hanff pointed out in a scathing critique, Google Chrome “ships almost no built-in anti-fingerprinting defenses”, leaving at least 30 distinct fingerprinting techniques viable. Firefox, despite having the privacy.resistFingerprinting flag, and Brave, with its “farbling” techniques, are engaged in a constant battle. The goal is to reduce the uniqueness, the entropy, exposed by the browser.

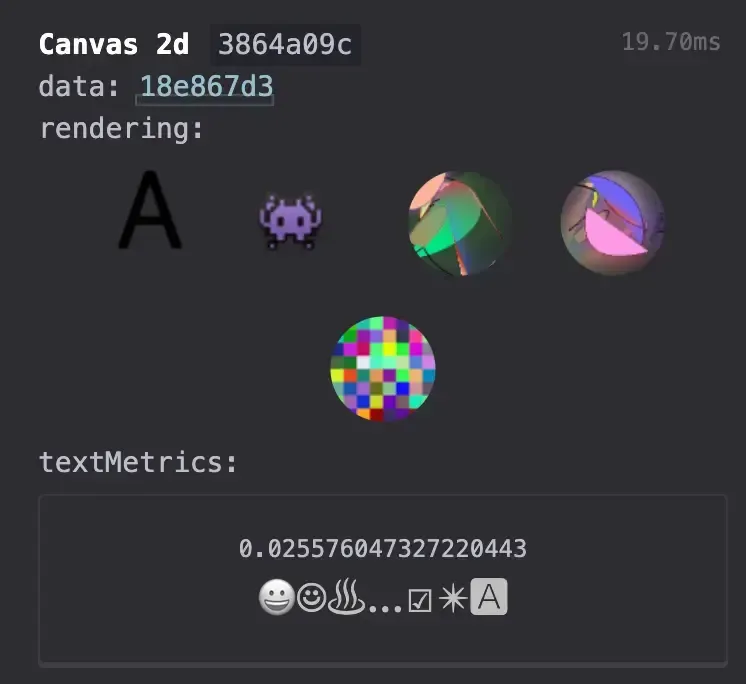

Tools like CreepJS exist precisely to probe these defenses, testing everything from Canvas and WebGL rendering to audio contexts and font enumeration. They look for inconsistencies (“lies”) that reveal a browser is trying to hide its true fingerprint or that expose underlying, stable identifiers, like the Firefox IndexedDB bug.

The Mitigation: Simplicity Itself

The fix for this specific Firefox/Tor flaw, as implemented in Firefox 150 and ESR 140.10.0 (CVE-2026-6770), was elegantly simple: canonicalize the output.

Instead of returning database names in the order dictated by the internal hash set layout, Firefox now returns them in a sorted (e.g., lexicographic) order. This removes the entropy without breaking the API’s utility for developers.
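The idea can be sketched in a few lines. This is an illustration of canonicalization, not Firefox’s actual patch. canonicalizeDatabases is a hypothetical name, and comparing names by code unit is an assumption about what “sorted” means here.

```javascript
// Sketch of the canonicalization idea, not Firefox's actual patch.
// `entries` mimics the { name, version } records that databases() resolves to.
function canonicalizeDatabases(entries) {
  return [...entries].sort((a, b) => {
    if (a.name < b.name) return -1;
    if (a.name > b.name) return 1;
    return 0;
  });
}

// The same databases, as two processes with different hash layouts might list them.
const processOne = [
  { name: 'g', version: 1 },
  { name: 'c', version: 1 },
  { name: 'a', version: 1 },
];
const processTwo = [
  { name: 'a', version: 1 },
  { name: 'g', version: 1 },
  { name: 'c', version: 1 },
];

console.log(canonicalizeDatabases(processOne).map(db => db.name).join(',')); // a,c,g
console.log(canonicalizeDatabases(processTwo).map(db => db.name).join(',')); // a,c,g
```

After sorting, the output depends only on which databases exist, so both processes return the same list and the ordering carries no per-process information.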

This highlights a critical security principle: privacy bugs aren’t always about leaking sensitive data directly. Sometimes, they’re about exposing implementation details that can be transformed into an identifier. Any API that returns unordered, process-scoped, deterministic data is a potential fingerprinting vector.

The Inherent Limits of Tor’s Anonymity Model

This browser-level flaw intersects with a well-known limitation of the Tor network itself. The Tor Project openly states that its low-latency onion routing “cannot fully prevent end-to-end traffic-correlation when an adversary observes both ends.” If a powerful adversary can watch traffic entering the Tor network (at the guard node) and leaving it (at the exit node), they can statistically correlate flows, especially with enough data.

The Tor Project’s recommended defenses focus on mitigation: protocol-level padding to obscure traffic patterns, circuit isolation, cautious application use, and growing the relay pool to dilute adversarial control. The recent Firefox IndexedDB vulnerability, however, adds a new, client-side correlation vector that operates independently of the network-layer protections Tor provides.

Even if your traffic is perfectly anonymized over the wire, your browser can still give you away.

Practical Ramifications and the Path Forward

For users, the immediate takeaway is to update Firefox-based browsers, Tor Browser included. On an unpatched build, only fully quitting and restarting the browser resets the identifier. For those relying on Tor Browser for critical anonymity, understanding this class of vulnerability is essential. The promise of “New Identity” was always more fragile than advertised, and this bug proves that client-side software complexity is a persistent enemy of privacy.

For architects and developers, this incident is a masterclass in threat modeling. It underscores that:

- Process boundaries are not privacy boundaries. Any global, process-scoped state is a potential cross-context identifier.

- APIs must be designed with fingerprinting resistance in mind. Non-deterministic or canonicalized outputs should be the default for metadata APIs.

- Privacy features must be tested holistically. Isolating cookies is useless if a low-level storage API leaks a stable ID.

- The cat-and-mouse game continues. As tools like CreepJS demonstrate, the fingerprinting surface area is vast (Canvas, WebGL, Audio, Screen properties, TLS handshakes, etc.). Defenders must systematically audit and “fuzz” every information-leaking interface.
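As one small example of such an audit, the sketch below checks list-returning APIs for suspicious ordering. It is a heuristic with hypothetical names (isSorted, flagOrderingLeak). It would also flag harmless insertion order, so a hit is a prompt to investigate rather than proof of a leak.

```javascript
// Hypothetical audit helper: true when names are in ascending code-unit order.
function isSorted(names) {
  return names.every((name, i) => i === 0 || names[i - 1] <= name);
}

// Heuristic: an ordering that repeats across contexts that should be
// isolated, yet is not canonical, deserves a closer look.
function flagOrderingLeak(samplesFromIsolatedContexts) {
  const keys = samplesFromIsolatedContexts.map(sample => JSON.stringify(sample));
  const identical = keys.every(key => key === keys[0]);
  const canonical = samplesFromIsolatedContexts.every(isSorted);
  return identical && !canonical;
}

// Same non-sorted order seen from two isolated contexts: flagged.
console.log(flagOrderingLeak([['g', 'c', 'a'], ['g', 'c', 'a']])); // true
// Sorted output in both contexts: not flagged.
console.log(flagOrderingLeak([['a', 'c', 'g'], ['a', 'c', 'g']])); // false
```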

The indexedDB.databases() flaw is more than just a bug; it’s a lens. It reveals how the complex, layered architecture of modern browsers, with its caches, hash tables, and performance optimizations, can create unintentional side channels that violate the very isolation guarantees we take for granted.

As we push for more private browsing experiences, we must demand that these architectures are built not just for speed and compatibility, but for genuine, verifiable unlinkability. Otherwise, our “private” windows are just lightly tinted glass.