The walled gardens of cloud AI just got a major crack. Claude Code, Anthropic’s sophisticated coding agent, can now run against local models through llama.cpp’s newly implemented Anthropic Messages API support – and early adopters are discovering both the incredible potential and harsh realities of cutting the cloud cord.

The Integration That Breaks Vendor Lock-In

The llama.cpp pull request #17570 represents more than just another feature addition. It’s a fundamental shift in how developers can leverage advanced coding assistance without monthly subscription fees or privacy concerns. The integration enables Claude Code to connect directly to a local llama.cpp server, which converts requests from Anthropic’s format to its OpenAI-compatible internal format and reuses the existing inference pipeline.
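To make the format conversion concrete, here is a minimal Python sketch of the idea. It is not llama.cpp's actual code (the server is written in C++), and the function name `anthropic_to_openai` is hypothetical. It assumes a simplified request containing only text content, and it skips tool calls and images entirely.

```python
from typing import Any


def anthropic_to_openai(request: dict[str, Any]) -> dict[str, Any]:
    """Translate a simplified Anthropic Messages request into OpenAI chat format."""

    def flatten(content: Any) -> Any:
        # Anthropic allows content as a plain string or as a list of typed blocks.
        # This sketch keeps only the text blocks.
        if isinstance(content, list):
            return "".join(b.get("text", "") for b in content if b.get("type") == "text")
        return content

    messages: list[dict[str, Any]] = []

    # Anthropic carries the system prompt as a top-level field;
    # OpenAI-style chat expects it as the first message.
    system = flatten(request.get("system"))
    if system:
        messages.append({"role": "system", "content": system})

    for msg in request.get("messages", []):
        messages.append({"role": msg["role"], "content": flatten(msg["content"])})

    converted: dict[str, Any] = {
        "model": request.get("model"),
        "messages": messages,
        "stream": bool(request.get("stream", False)),
    }
    if "max_tokens" in request:
        converted["max_tokens"] = request["max_tokens"]
    if "stop_sequences" in request:
        # Same idea, different field name on the OpenAI side.
        converted["stop"] = request["stop_sequences"]
    return converted
```

The main structural difference the sketch captures is where the system prompt lives: top-level in Anthropic's format, first message in OpenAI's. A real implementation also has to map tool definitions, tool results, and the streaming event format back in the other direction.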

This isn’t just about convenience – it’s about sovereignty. As one developer noted, “This allows llama.cpp to serve as a local/self-hosted alternative to Anthropic’s Claude API”, fundamentally changing the economics of AI-assisted development. The feature implements key endpoints including POST /v1/messages for chat completions with streaming support and POST /v1/messages/count_tokens for prompt token counting, creating a complete local inference ecosystem.
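As a rough illustration of what talking to those two endpoints looks like, the sketch below builds the HTTP requests with Python's standard library without sending them. Several things here are assumptions rather than facts from the pull request: the server address `http://localhost:8080`, the placeholder model name `local`, and the token-counting path `/v1/messages/count_tokens`, which follows Anthropic's own API layout.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080"  # assumption: where the local llama.cpp server listens


def build_request(path: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request to the local server."""
    return urllib.request.Request(
        BASE_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def messages_request(prompt: str, max_tokens: int = 256, stream: bool = False) -> urllib.request.Request:
    """Chat completion in Anthropic Messages format."""
    return build_request("/v1/messages", {
        "model": "local",  # placeholder name, an assumption
        "max_tokens": max_tokens,
        "stream": stream,
        "messages": [{"role": "user", "content": prompt}],
    })


def count_tokens_request(prompt: str) -> urllib.request.Request:
    """Prompt token counting; path assumed to mirror Anthropic's API."""
    return build_request("/v1/messages/count_tokens", {
        "model": "local",
        "messages": [{"role": "user", "content": prompt}],
    })
```

With a server running, passing either request to `urllib.request.urlopen` and decoding the JSON body should be enough for a quick smoke test before pointing Claude Code at the server.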

The Hardware Reality Check: Not for the Faint of Heart

Early testing reveals the brutal truth about running advanced coding agents locally. One developer running Claude Code with gpt-oss 120b reported achieving “700 pp and 60 t/s” (prompt processing and generation speed, in tokens per second) – respectable performance, but requiring substantial hardware investment. The developer bluntly warned: “I don’t recommend trying slower LLMs because the prompt processing time is going to kill the experience.”

Why such demanding requirements? Claude Code’s system architecture carries significant overhead. The system prompt alone consumes about 15,000 tokens, as confirmed by community testing. This context pressure limits viable model choices significantly. As contributors noted, “I haven’t seen a LLM in the range of 30B parameters that can deal with this”, suggesting that smaller models struggle to cope with a prompt of that size rather than simply running too slowly.
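A quick back-of-the-envelope calculation shows why prompt processing speed dominates the experience. The sketch below reads the reported “700 pp” as 700 prompt tokens processed per second and uses the roughly 15,000-token system prompt. The slower configuration is a hypothetical comparison point, and the estimate ignores any prompt caching the server may apply on later turns.

```python
def prefill_seconds(prompt_tokens: int, pp_tokens_per_s: float) -> float:
    """Time spent processing the prompt before the first output token appears."""
    if pp_tokens_per_s <= 0:
        raise ValueError("prompt processing speed must be positive")
    return prompt_tokens / pp_tokens_per_s


def generation_seconds(output_tokens: int, tg_tokens_per_s: float) -> float:
    """Time spent generating the reply once the prompt has been processed."""
    if tg_tokens_per_s <= 0:
        raise ValueError("generation speed must be positive")
    return output_tokens / tg_tokens_per_s


SYSTEM_PROMPT_TOKENS = 15_000  # approximate size reported by community testing
REPLY_TOKENS = 300             # hypothetical reply length

setups = [
    ("reported gpt-oss 120b setup", 700, 60),
    ("hypothetical slower setup", 100, 15),
]
for label, pp, tg in setups:
    wait = prefill_seconds(SYSTEM_PROMPT_TOKENS, pp)
    reply = generation_seconds(REPLY_TOKENS, tg)
    print(f"{label}: ~{wait:.0f} s before the first token, ~{reply:.0f} s to write the reply")
```

At 700 tokens per second the system prompt alone costs about 21 seconds before anything is generated; at 100 tokens per second that wait grows to 150 seconds, which is the kind of delay the developer's warning refers to.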

The Model Selection Conundrum

The integration works technically with “pretty much any model”, according to one contributor, but practical usability requires careful model selection. Developers report that models like MiniMax M2, Kimi K2, and Qwen3 Coder 480B-A35B show promise for approaching Claude Sonnet-level performance, while simpler coding tasks can run on more accessible hardware like a single RTX 4090 with Qwen3 Coder 30B-A3B or gpt-oss-20b.

However, there’s a performance-quality tradeoff that becomes immediately apparent. As one tester observed, “gpt-oss-120B makes a lot of mistakes and it needs to iterate more.” The iteration requirement means that even with decent token-per-second rates, the overall coding experience might not match cloud offerings for complex tasks.

Why This Matters Beyond Claude Code

This integration represents a broader trend toward API standardization that benefits the entire open-source AI ecosystem. The fact that “other clients expecting Anthropic Messages API instead of OpenAI” can also leverage this implementation means we’re seeing consolidation around major API formats.

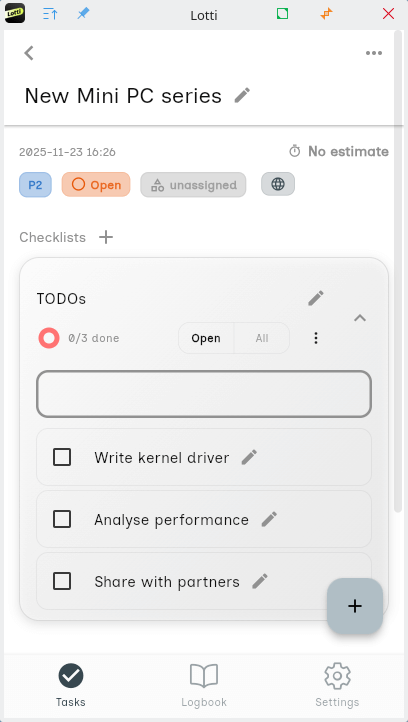

The move also highlights the growing maturity of local inference stacks. Projects like Lotti, which emphasizes “complete data ownership” and “configurable AI providers per category”, show that the market is demanding flexible, privacy-first AI tools that can mix local and cloud inference as needed.

The Performance vs. Privacy Calculus

Running Claude Code locally isn’t just about escaping monthly bills – it’s about data sovereignty. When your code never leaves your infrastructure, you eliminate the privacy concerns that come with cloud-based AI assistance. However, this comes with significant computational costs.

Early adopters discovered that some larger models like MiniMax M2 IQ4_XS were “far too slow” for practical use, with one test taking “30 minutes to answer the first question about a project.” This performance gap is the tradeoff developers must weigh against the benefits of complete data control and no subscription fees.

The Future of Local Coding Agents

The llama.cpp integration opens up possibilities beyond just running Claude Code locally. It establishes a pattern that other AI coding tools could follow, creating a more competitive and open ecosystem. As developers experiment with different model combinations and hardware configurations, we’re likely to see optimized setups that balance performance, cost, and privacy according to specific use cases.

The community response suggests this is more than a niche feature. Developers are hungry for alternatives that don’t lock them into vendor ecosystems, and this implementation provides a path forward. However, as one contributor noted, “Local models would benefit from a more efficient CLI tool, one that makes better use of the available context”, suggesting there’s still optimization work to be done.

Getting Started with Caution

For developers ready to experiment, the path involves building a recent llama.cpp that includes the Anthropic API support, setting up a compatible local model (70B+ parameters recommended), and configuring Claude Code to point to your local server. The performance sweet spot seems to be in the 120B parameter range for serious coding work, though simpler tasks might run adequately on smaller models.
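The wiring for those steps might look something like the sketch below. Treat it as a starting point under stated assumptions: the helper names are hypothetical, the model path is whatever GGUF file you downloaded, port 8080 and the 32,768-token context size are illustrative choices (the context must at least fit the roughly 15,000-token system prompt), and redirecting Claude Code through the `ANTHROPIC_BASE_URL` environment variable is assumed to be sufficient. Your setup may also need authentication-related variables.

```python
import os
import subprocess
from typing import Optional


def llama_server_command(model_path: str, port: int = 8080, ctx_size: int = 32768) -> list[str]:
    """Command line for starting llama.cpp's server with a local GGUF model."""
    return [
        "llama-server",
        "-m", model_path,              # path to your model file
        "--port", str(port),
        "--ctx-size", str(ctx_size),   # must leave room beyond the system prompt
    ]


def claude_code_env(base_url: str, base_env: Optional[dict] = None) -> dict:
    """Copy the environment and point Anthropic API traffic at the local server."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = base_url  # assumption: this alone redirects Claude Code
    return env


def launch_claude_code(base_url: str = "http://localhost:8080") -> subprocess.CompletedProcess:
    """Start the `claude` CLI against the local server (requires it to be installed)."""
    return subprocess.run(["claude"], env=claude_code_env(base_url))
```

Start the server first with the list returned by `llama_server_command`, for example through `subprocess.Popen`, wait until it has finished loading the model, then call `launch_claude_code()` from a separate terminal or process.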

The integration represents a significant milestone in the democratization of AI development tools. While cloud-based AI coding assistants have dominated the landscape, this breakthrough gives developers an escape route from vendor lock-in, monthly subscriptions, and privacy concerns. The performance requirements mean it’s not for everyone – but for organizations with the right hardware and data sensitivity concerns, it’s a game-changing alternative.

As the local AI ecosystem matures and models become more efficient, we can expect this pattern to become increasingly viable for mainstream development workflows. The era of cloud-only AI coding assistance is officially over – what remains to be seen is how quickly local alternatives can close the performance gap.