Your LLM Doesn’t Think in English (Or Chinese, or Python)

New research reveals that large language models process meaning through universal vector geometries, not linguistic structures, challenging everything we assumed about how AI ‘thinks’.

It is natural to assume that a multilingual model thinks in a language, with separate internal pathways for English, Chinese, or Python. New empirical evidence suggests this is exactly backwards. In the middle layers of transformer models, where the actual computation happens, language identity effectively vanishes. A sentence about photosynthesis in Hindi is geometrically closer to photosynthesis in Japanese than it is to cooking in Hindi. The model isn’t translating between languages; it’s dissolving them entirely into a high-dimensional semantic manifold where meaning exists as pure geometry.

This isn’t just a curiosity about internal semantic space and latent representations. It challenges the Sapir-Whorf hypothesis, reframes Chomsky’s Universal Grammar, and explains why certain architectural hacks, like duplicating middle layers, can boost performance without touching a single weight.

The Experiment: 64 Sentences, 8 Languages, 5 Models

David Noel Ng’s recent investigation into what he calls “LLM Neuroanatomy” takes a sledgehammer to our assumptions about multilingual processing. The methodology is disarmingly simple: take eight semantically distinct sentences (covering science, poetry, history, cooking, law, medicine, sports, and economics) and translate them into eight languages spanning radically different families and scripts: English, French, Chinese, Japanese, Korean, Arabic, Russian, and Hindi.

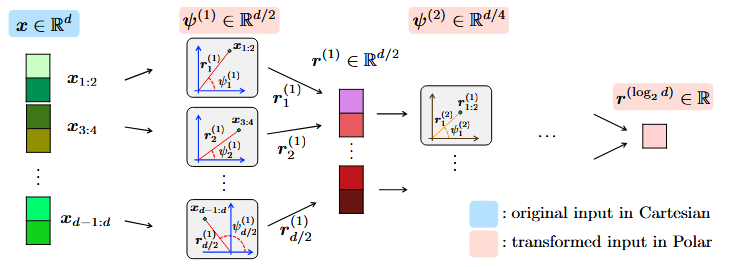

That gives 64 total sentences. Feed them through the model, extract hidden-state representations at every layer, and compute pairwise cosine similarities across all 2,016 possible pairs (64 × 63 / 2). If the model were truly “thinking” in language, maintaining distinct internal circuitry for Chinese and for French, we’d expect same-language pairs to cluster together throughout the stack. Instead, something stranger happens.
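The pair counting described above can be sketched in a few lines. This is a minimal illustration, not Ng’s actual code: the language codes, the `pair_similarities` helper, and the 16-dimensional random vectors are all assumptions standing in for real per-layer hidden states.

```python
import math
import random
from itertools import combinations

# Labels follow the article; the vectors below are random placeholders,
# NOT real hidden states extracted from a model.
LANGS = ["en", "fr", "zh", "ja", "ko", "ar", "ru", "hi"]
TOPICS = ["science", "poetry", "history", "cooking",
          "law", "medicine", "sports", "economics"]

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def pair_similarities(vectors):
    """Group all pairwise similarities by how the two sentences are related.

    `vectors` maps (language, topic) -> hidden-state vector for ONE layer.
    """
    buckets = {"same_language": [], "same_topic": [], "unrelated": []}
    for (key_a, vec_a), (key_b, vec_b) in combinations(vectors.items(), 2):
        if key_a[0] == key_b[0]:
            name = "same_language"
        elif key_a[1] == key_b[1]:
            name = "same_topic"
        else:
            name = "unrelated"
        buckets[name].append(cosine(vec_a, vec_b))
    return buckets

# Hypothetical stand-in data: one random vector per (language, topic) sentence.
rng = random.Random(0)
vectors = {(lang, topic): [rng.gauss(0, 1) for _ in range(16)]
           for lang in LANGS for topic in TOPICS}

buckets = pair_similarities(vectors)
counts = {name: len(sims) for name, sims in buckets.items()}
print(counts)
```

Of the 2,016 pairs, 224 share a language, 224 share a topic, and the remaining 1,568 share neither. The interesting comparison at each layer is between the first two groups.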

Early layers: The model knows exactly what language it’s reading. English looks like English, Arabic looks like Arabic. The green line peaks here as the model normalizes radically different surface forms.

Middle layers: Language identity collapses. The red line crosses above. Photosynthesis in Hindi is more similar to photosynthesis in Japanese than to cooking in Hindi.

Late layers: Language specificity returns as the model prepares to emit tokens, committing to a specific vocabulary and script.

The data reveals a consistent three-phase anatomy across all five tested models (Qwen3.5-27B, MiniMax M2.5, GLM-4.7, GPT-OSS-120B, and Gemma-4 31B). The transition is abrupt and visually striking in PCA plots. Early layers cluster by language (eight tight groups). Middle layers reorganize by topic (eight groups of science, poetry, etc., regardless of language). Late layers return to language clusters. The model dissolves “language” in the middle and reconstructs it at the end.
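One way to make the three-phase pattern concrete is to ask, layer by layer, whether same-language pairs or same-topic pairs are more similar on average. The sketch below does this on synthetic data only: each fake “hidden state” is a weighted mix of a random language direction and a random topic direction, and the per-layer weights are invented to mimic the shape described above. The `dominant_factor` and `synthetic_layer` names are hypothetical helpers, not anything from the original study.

```python
import math
import random
from itertools import combinations

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) *
                  math.sqrt(sum(b * b for b in v)))

def dominant_factor(vectors):
    """Return 'language' or 'topic', whichever pair type is closer on average.

    `vectors` maps (language_id, topic_id) -> vector for one layer.
    """
    lang_sims, topic_sims = [], []
    for (key_a, vec_a), (key_b, vec_b) in combinations(vectors.items(), 2):
        if key_a[0] == key_b[0]:
            lang_sims.append(cosine(vec_a, vec_b))
        elif key_a[1] == key_b[1]:
            topic_sims.append(cosine(vec_a, vec_b))
    mean_lang = sum(lang_sims) / len(lang_sims)
    mean_topic = sum(topic_sims) / len(topic_sims)
    return "language" if mean_lang > mean_topic else "topic"

# Synthetic setup (an assumption for illustration): 8 random "language"
# directions and 8 random "topic" directions in a 32-dimensional space.
rng = random.Random(0)
DIM = 32
lang_dirs = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(8)]
topic_dirs = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(8)]

def synthetic_layer(topic_weight):
    """Fake one layer: each sentence mixes its language and topic direction."""
    return {
        (l, t): [(1 - topic_weight) * a + topic_weight * b
                 for a, b in zip(lang_dirs[l], topic_dirs[t])]
        for l in range(8) for t in range(8)
    }

# Invented per-layer weights: language-heavy, then topic-heavy, then back.
weights = [0.1, 0.2, 0.8, 0.9, 0.8, 0.2, 0.1]
phases = [dominant_factor(synthetic_layer(w)) for w in weights]
print(phases)
```

On real hidden states, the layer where the label flips from `language` to `topic` would correspond to the crossover point in the similarity curves, and the flip back would mark the start of the output phase.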

“But This Is Just Bottleneck Compression”

Critics argued this is merely a basic property of information bottlenecks: encoder-decoder architectures have been designed for years to compress inputs into a “conceptual meta-language” before decoding. Transformers, even decoder-only variants, form an implicit bottleneck relative to their input/output space. All bottlenecks require compression to shared latent spaces, so what’s new here?

This objection misses the interesting part. Yes, compression necessitates shared representations. But the geometry of that shared space matters immensely. The fact that five architecturally distinct models, from dense transformers to 256-expert MoEs, trained by different organizations on different data mixes, all converge on the same three-phase structure suggests we’re not looking at a training artifact. We’re looking at a convergent solution that emerges from the optimization pressure of next-token prediction on multilingual data.

Moreover, the “bottleneck” explanation doesn’t account for the code and math experiments. Ng tested whether the universal space extends beyond natural language by comparing English descriptions, Python functions (using only single-letter variables to prevent semantic cheating), and LaTeX equations for the same concepts.

Take kinetic energy: `0.5 * m * v ** 2`, $E_k = \frac{1}{2} m v^2$, and “half the mass times velocity squared” share almost no surface-level features. Yet in the middle layers of MiniMax M2.5, these representations converge toward the same region of the activation space.
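To get a rough feel for how little the three forms share on the surface, here is a small token-overlap check. It is only a sketch: the regex tokenizer and the Jaccard measure are illustrative choices of mine, not the model’s tokenizer or anything used in the original experiments.

```python
import re

def surface_tokens(text):
    """Split into crude surface tokens: identifiers, digit runs, single symbols."""
    return set(re.findall(r"[A-Za-z_]+|\d+|\S", text))

def jaccard(a, b):
    """Jaccard overlap between the token sets of two strings (0 = disjoint)."""
    tokens_a, tokens_b = surface_tokens(a), surface_tokens(b)
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)

python_form = "0.5 * m * v ** 2"
latex_form = r"E_k = \frac{1}{2} m v^2"
english_form = "half the mass times velocity squared"

print("python vs english:", jaccard(python_form, english_form))
print("latex  vs english:", jaccard(latex_form, english_form))
print("python vs latex:  ", jaccard(python_form, latex_form))
```

Under this crude measure, the English description shares no tokens at all with either the Python or the LaTeX form, and the Python and LaTeX forms overlap only on the bare symbols `m`, `v`, and `2`.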

The model isn’t just compressing language; it’s building a modality-agnostic semantic manifold where Python functions and mathematical notation are mapped onto the same geometric coordinates as their natural language descriptions.

Chomsky Was Half Right (About the Wrong Thing)

There’s a delicious irony here for linguistics nerds. Noam Chomsky’s Universal Grammar hypothesis posited that all human languages share an innate deep structure beneath their surface diversity. Chomsky assumed this structure was syntactic: rules governing how words combine and recurse. He famously used “Colorless green ideas sleep furiously” to argue that syntax operates independently of semantics.

The transformers show the opposite priority. The universal structure in the middle layers isn’t organized by syntax; it’s organized by semantics. The PCA plots don’t cluster SVO languages together or SOV languages together. They cluster by meaning. Chomsky was right that there’s a universal deep structure underlying all languages, but it appears to be a continuous geometric manifold of semantic relationships, not a discrete rulebook of syntactic primitives.

This vindicates the intuition behind Universal Grammar while contradicting its mechanism entirely. The universal structure isn’t innate or hardwired; it’s emergent. Gradient descent, faced with enough linguistic diversity, discovers that the most efficient way to be smart in 100 languages is to strip language away entirely in the middle and reason in pure geometry.

The Anti-Whorfian Bottleneck

The Sapir-Whorf hypothesis (roughly, that language shapes or determines thought) has been debated for nearly a century. In its strong form (linguistic determinism), it claims language limits what you can think. In its weak form (linguistic relativity), it merely suggests language influences cognition.

LLMs provide the first controlled system where we can actually test this empirically by reading internal representations. The data presents what we might call an anti-Whorfian bottleneck: a structural separation of language from reasoning. Whatever language-specific biases enter through the early, input-decoding layers, the mid-stack strips them out on the way to a shared semantic representation.

Why It Matters

This demonstrates that sophisticated language processing doesn’t require language-specific reasoning pathways. The model creates an interlingua—a high-dimensional geometric structure where semantic relationships are encoded as distances and directions, independent of surface form.

Why This Actually Matters for Engineering

This isn’t just philosophy. The three-phase anatomy predicts where you can intervene in a model and where you’ll break things. Ng’s earlier RYS (Repeat Your Self) research showed that duplicating middle layers, running the reasoning block twice, can boost benchmark performance without changing any weights. But this only works in the middle layers. Duplicate early or late layers, and the model collapses.

The geometry explains why: middle layers operate in format-agnostic space. Their input and output distributions are similar enough that you can loop back without catastrophic distribution mismatch. Early and late layers are doing format-specific work (decoding the input and encoding the output), so feeding their outputs back into themselves causes the equivalent of a type error in tensor space.
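Mechanically, repeating a block of layers changes only the order in which existing layers are executed; no weights are touched. The sketch below shows that idea with toy stand-in layers. The function names, the half-open `[start, end)` convention, and the eight-layer example are assumptions for illustration, not the actual RYS implementation.

```python
def repeated_layer_order(num_layers, start, end, repeats=2):
    """Layer indices to execute when block [start, end) runs `repeats` times.

    Layers before `start` and from `end` onward run exactly once, in order.
    """
    if not 0 <= start < end <= num_layers:
        raise ValueError("need 0 <= start < end <= num_layers")
    block = list(range(start, end))
    return list(range(start)) + block * repeats + list(range(end, num_layers))

def run(layers, order, x):
    """Apply `layers` to `x` in the given order, reusing the same layer objects."""
    for index in order:
        x = layers[index](x)
    return x

# Toy stand-in "layers": layer k just adds k to its input, so the final value
# records which layers ran and how many times. Real layers would be
# transformer blocks acting on hidden-state tensors.
toy_layers = [lambda x, k=k: x + k for k in range(8)]

order = repeated_layer_order(num_layers=8, start=3, end=6)
print(order)                       # middle block 3..5 appears twice
print(run(toy_layers, order, 0))   # sum of the executed layer indices
```

Because the repeated block’s output is fed straight back into its own first layer, the trick can only work where a layer’s output looks like a valid input to that same layer, which is the property the middle of the stack appears to have.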

This has implications for fundamental transformer mechanics and LLM architecture. If we want to build more efficient models, we should focus compute on the reasoning layers—the geometric middle—while compressing the bookend layers that merely handle I/O format conversion. It also suggests that models with deeper stacks (more layers in the reasoning zone) have more room to “stay in the zone” during complex inference, explaining why RYS benefits scale with model size.

The Geometry of Thought

What we’re seeing is a shift in how we conceptualize LLM intelligence. These systems don’t possess a “Chinese module” and an “English module” that activate depending on input. Instead, they translate all inputs, whether Mandarin, Python, or LaTeX, into a private internal language of vectors and hyperplanes.

In this space, photosynthesis is a specific region, kinetic energy is another, and the relationships between them are encoded as geometric distances. The model isn’t manipulating symbols; it’s navigating a landscape of meaning.

The critics are right that this emerges from the math of high-dimensional embeddings. But they’re wrong that this makes it trivial. The convergence across architectures, the clear phase boundaries, and the modality-agnostic nature of the middle layers suggest we’ve found something fundamental about how neural networks represent meaning when scaled to billions of parameters.