The demo is flawless. A Slackbot answers complex data questions in natural language, generates charts on demand, and even suggests follow-up analyses. The room erupts in applause. Six months later, that same bot is abandoned, frozen in a sandbox, or actively generating metrics that make your CFO question their life choices. This isn’t a hypothetical; it’s the lived experience of organizations caught in the chasm between agentic AI’s promise and its messy, expensive reality.

“How did we end up in a situation where everything is possible yet nothing is actually changing? I read about companies replacing entire teams with AI agents, but at the same time there is no real usecase in it. Everybody is talking about how awesome agentic AI is, yet I have customers who aren’t able to open a PDF.”

That tension, between cutting-edge autonomy and basic digital literacy, defines the current moment. We’re building agents that can reason across multi-step workflows while our end users struggle with file associations. The problem isn’t the technology. It’s that we’re solving problems that were already solved, badly, while ignoring the organizational readiness crisis that actually determines success.

The Hype Machine vs. The Academic Reality Check

Silicon Valley’s narrative is seductive: agentic AI will autonomously resolve 80% of customer service issues by 2029, transform healthcare delivery, and make government services actually functional. Deloitte’s research shows 61% of healthcare leaders are already building agentic AI initiatives, with 98% expecting at least 10% cost savings. The use cases sound transformative:

- Healthcare: Post-discharge monitoring that improves care continuity, prior authorization optimization with closed-loop reporting

- Government: Automated permit prepopulation, intelligent service request routing, benefits application processing

- Enterprise: End-to-end claims adjudication, fraud detection, workforce capability transformation

But here’s where the story fractures. The same academic institutions building these systems are waving red flags. When researchers from MIT report that 95% of generative AI pilot projects are failing, and Stanford studies show agents hallucinate when domain knowledge is incomplete, you have to ask: are we measuring progress by press releases or by production outcomes?

The gap between research and reality shows up in how we talk about capabilities. A VP of Engineering might claim Claude is writing apps in three languages, doubling productivity. Meanwhile, a finance professional discovers their “revolutionary” PDF-to-Excel conversion is just Power Query with extra hallucination risk and a $20/month Copilot subscription.

The $50M Distraction: When Agents Reinvent the Wheel

The finance PDF story is the perfect microcosm of the hype-reality gap. A user proudly describes uploading 60 pages of transactions to Copilot, converting them to Excel in 30 seconds instead of having an associate do it manually. The response from actual finance professionals was brutal: “We’ve been doing this via Power Query for a half decade. The only thing you’ve added is a step that can fuck up the data translation.”

This is agentic AI’s dirty secret: it often solves problems that were already automated, but probabilistically instead of deterministically. The original poster didn’t need an AI agent. They needed to know their organization already had deterministic tools that return the same output for the same input every time. Instead, they got:

- Token costs for processing 60 pages

- Hallucination risk on financial data

- A validation burden that may exceed the original manual effort

- The false confidence that comes with “AI magic”

As one commenter noted: “Something output the PDF… start there.” The real problem wasn’t data entry; it was receiving data in a suboptimal format from a legacy system. An agent that converts PDFs is a bandage on a broken process. An agent that fixes the root cause by integrating directly with source systems? That’s value. But that’s not what we’re shipping.

Where Agents Actually Work: The Context Engineering Revolution

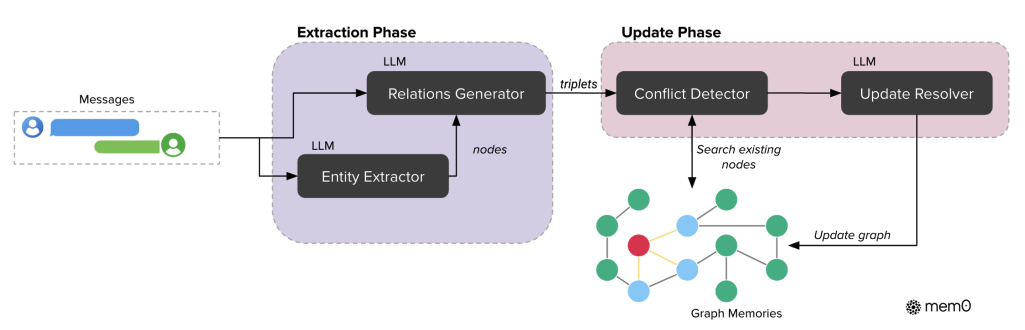

The agents that succeed in production share a common architectural pattern: they don’t rely on raw LLM power. They rely on structured context engineering. The Neo4j case studies reveal the blueprint:

1. Metadata-First Design (Quollio Technologies)

Instead of exposing sensitive data, agents operate over a knowledge graph of enterprise metadata. Business users ask governance questions, and agents navigate relationships across systems without touching raw records. This reduces compliance risk while improving traceability.

2. GraphRAG for Dynamic Retrieval (Simply AI)

Voice agents fail when they rely on static prompts. Simply AI’s solution retrieves only relevant context from a knowledge graph during each conversation turn, preserving structure for multi-tenant environments. Response consistency improves because agents aren’t improvising, they’re traversing verified relationships.
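To make the per-turn retrieval idea concrete, here is a minimal sketch, not Simply AI’s implementation. It assumes a toy in-memory graph of facts and a naive substring match in place of a real graph query; the `Fact`, `KnowledgeGraph`, and `build_turn_context` names and the clinic data are all hypothetical. The point it illustrates is that each turn receives only facts scoped to the calling tenant and relevant to the utterance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """One edge in a toy knowledge graph: subject -[relation]-> obj."""
    tenant: str
    subject: str
    relation: str
    obj: str


class KnowledgeGraph:
    def __init__(self, facts):
        self._facts = list(facts)

    def retrieve(self, tenant, utterance, limit=5):
        """Return this tenant's facts whose subject is mentioned in the utterance.

        The substring match is a stand-in for a real graph query (assumption).
        """
        text = utterance.lower()
        hits = [
            f for f in self._facts
            if f.tenant == tenant and f.subject.lower() in text
        ]
        return hits[:limit]


def build_turn_context(graph, tenant, utterance):
    """Build the small, structured context handed to the model for one turn."""
    return [
        f"{f.subject} {f.relation} {f.obj}"
        for f in graph.retrieve(tenant, utterance)
    ]


# Hypothetical data: two tenants share a subject name but not an answer.
graph = KnowledgeGraph([
    Fact("clinic_a", "cardiology", "opens_at", "08:00"),
    Fact("clinic_a", "billing", "phone_extension", "204"),
    Fact("clinic_b", "cardiology", "opens_at", "10:00"),
])

context = build_turn_context(graph, "clinic_a", "When does cardiology open?")
print(context)
```

Because the tenant filter is applied inside retrieval rather than in the prompt, another tenant’s facts never reach the model in the first place.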

3. Explicit State Management (Floorboard AI)

Training pilots for air traffic control requires agents that reason over airport layouts modeled as graphs, not free text. The agent integrates real-time weather data, computes exact taxi routes, and responds using standard phrasing. It doesn’t guess, it executes within explicit constraints.
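The difference between computing a route and guessing one can be sketched in a few lines. The example below is an illustration only, not Floorboard AI’s system: the airport layout, node names, and distances are invented, and closed taxiway segments stand in for whatever real-time constraints (such as weather) the production agent takes into account. It uses Dijkstra’s algorithm from the standard library’s `heapq`.

```python
import heapq

# Hypothetical airport layout: node -> {neighbor: distance in meters}.
AIRPORT = {
    "gate_4": {"taxiway_A": 200},
    "taxiway_A": {"gate_4": 200, "taxiway_B": 300, "taxiway_C": 500},
    "taxiway_B": {"taxiway_A": 300, "runway_27": 250},
    "taxiway_C": {"taxiway_A": 500, "runway_27": 400},
    "runway_27": {"taxiway_B": 250, "taxiway_C": 400},
}


def shortest_route(graph, start, goal, closed=frozenset()):
    """Dijkstra's algorithm. Returns (distance, path), or None if unreachable.

    `closed` is a set of frozenset({a, b}) segments that may not be used.
    """
    queue = [(0, start, [start])]
    visited = set()
    while queue:
        dist, node, path = heapq.heappop(queue)
        if node == goal:
            return dist, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, length in graph.get(node, {}).items():
            if neighbor in visited or frozenset((node, neighbor)) in closed:
                continue
            heapq.heappush(queue, (dist + length, neighbor, path + [neighbor]))
    return None  # No legal route: the agent should say so, not invent one.


print(shortest_route(AIRPORT, "gate_4", "runway_27"))
closed = {frozenset(("taxiway_A", "taxiway_B"))}
print(shortest_route(AIRPORT, "gate_4", "runway_27", closed))
```

When no route exists the function returns `None`, which gives the agent an explicit "cannot comply" signal instead of a plausible-sounding answer.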

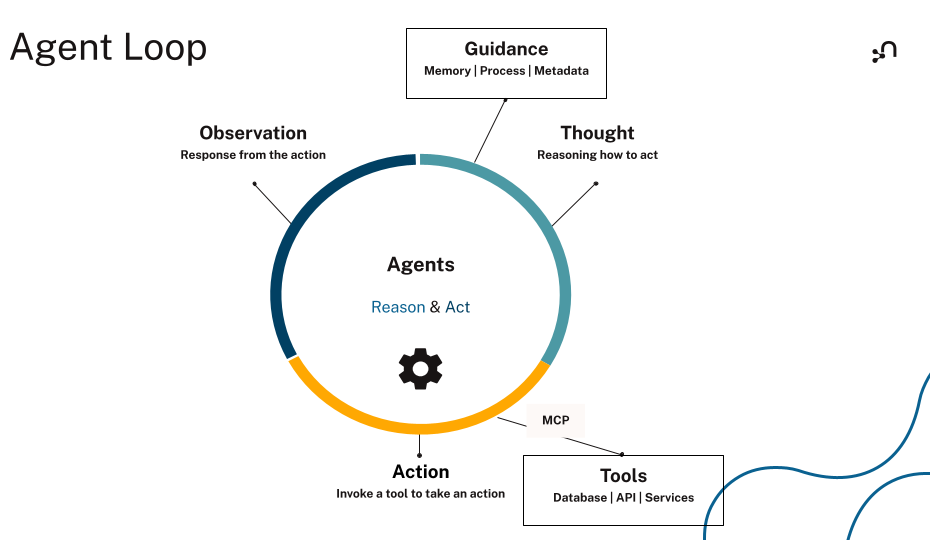

The ReAct loop (Reason → Act → Observe → Iterate) looks elegant on paper. In practice, it breaks down when:

- Context windows overflow: Agents lose track of state in long workflows

- Tool misuse: Agents call the wrong API or pass incorrect parameters

- Hallucination under uncertainty: When data is missing, agents invent plausible-sounding garbage
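Each of these failure modes has a fairly mechanical guard. The sketch below is one possible shape, under stated assumptions: `policy` is a placeholder for the LLM call (here a scripted function), `lookup_invoice` and its data are hypothetical, and the limits are arbitrary. It bounds the context passed to the policy, validates tool names and arguments before calling, feeds errors back as observations, and escalates when the step budget runs out.

```python
import inspect


class ToolError(Exception):
    """Raised for an unknown tool, bad arguments, or missing data."""


def lookup_invoice(invoice_id: str) -> dict:
    # Hypothetical data source standing in for a real system of record.
    invoices = {"INV-1": {"amount": 120.0, "status": "paid"}}
    if invoice_id not in invoices:
        raise ToolError(f"unknown invoice {invoice_id}")
    return invoices[invoice_id]


TOOLS = {"lookup_invoice": lookup_invoice}


def call_tool(name, args):
    """Reject unknown tools and mismatched arguments before executing."""
    if name not in TOOLS:
        raise ToolError(f"unknown tool {name}")
    tool = TOOLS[name]
    try:
        inspect.signature(tool).bind(**args)
    except TypeError as exc:
        raise ToolError(f"bad arguments for {name}: {exc}") from exc
    return tool(**args)


def run_agent(policy, question, max_steps=4, history_limit=6):
    """Reason -> Act -> Observe loop with a step budget and bounded context."""
    history = [("question", question)]
    for _ in range(max_steps):
        # Keep the question pinned and only the most recent observations.
        context = history[:1] + history[1:][-history_limit:]
        action = policy(context)
        if action["type"] == "answer":
            return action["text"]
        try:
            observation = call_tool(action["tool"], action["args"])
        except ToolError as exc:
            observation = {"error": str(exc)}  # Surface the gap; do not guess.
        history.append(("observation", observation))
    return "ESCALATE: step budget exhausted"


def scripted_policy(context):
    """Stand-in for the model: look the invoice up once, then answer."""
    kind, value = context[-1]
    if kind == "question":
        return {"type": "tool", "tool": "lookup_invoice",
                "args": {"invoice_id": "INV-1"}}
    if "error" in value:
        return {"type": "answer", "text": "I could not find that invoice."}
    return {"type": "answer", "text": f"Invoice is {value['status']}."}


print(run_agent(scripted_policy, "Is INV-1 paid?"))
```

None of this makes the model smarter; it only ensures that a confused model fails loudly and cheaply.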

The solution isn’t bigger models, it’s better context architecture. As the Neo4j research shows, production-ready agents separate short-term reasoning context from long-term memory, use knowledge graphs for multi-hop reasoning, and enforce governance through tenant isolation and human approval gates.

The Organizational Readiness Crisis

Here’s the uncomfortable truth: your agentic AI initiative is probably failing because your organization is at Level 1-2 maturity trying to deploy Level 4-5 capabilities.

The SecurityBrief AI maturity model shows most organizations are stuck:

- Level 1 (Reactive): Shadow AI usage, employees using ChatGPT without authorization

- Level 2 (Organized): Basic chatbots, agent assist features, translations

- Level 3 (Strategic): Systematic integration with sentiment analysis and intent detection

- Level 4 (Intelligent): AI agents leveraging enterprise systems for autonomous task completion

- Level 5 (Autonomous): Skynet-level systems handling complex strategic decisions

More than half of organizations are still in pilot phases. They’ve bought the tools but can’t deploy them effectively. The gap isn’t technical, it’s organizational.

The Silent Killer: Lack of Readiness

Employees worry AI will replace them (61% believe it could happen within 3-5 years). This fear doesn’t disappear because you deploy a shiny new agent. It metastasizes. When you don’t communicate purpose, train effectively, or acknowledge legitimate concerns, adoption stalls. Worse, people create workarounds that increase security risk: hello, shadow AI.

Deskpro’s experience is telling: their engineering team loves AI coding assistants. Their support team benefits from AI-integrated workflows. But a four-month project to replace human roles? Terminated and written off. The technology wasn’t the problem. The organizational change management was.

The Security Veto

Security is no longer a checkbox; it’s a veto. Seventy-eight percent of organizations involve IT/Security in final AI purchasing decisions. The biggest risk when moving from Level 3 to Level 4 is exposing sensitive data to LLMs that are vulnerable to prompt injection attacks.

For regulated industries, the architecture matters more than the model. Most AI SaaS solutions process data over public cloud infrastructure. For healthcare, finance, or government, that’s a non-starter. The solution is Virtual Private Cloud deployments or sovereign clouds where data stays within controlled networks. But that requires infrastructure most organizations haven’t built.

The Business Model Apocalypse: “Death of the Seat”

Workday’s recent earnings drama exposes the economic tension. The company is pivoting from “per seat” pricing to “Flex Credits”, charging for AI actions rather than human logins. Why? Because if AI agents can process invoices, you don’t need as many AP clerks. If you don’t need as many clerks, you don’t buy as many seats.

This is the “AI cannibalization” problem: AI success threatens the core SaaS revenue model. Workday’s stock trades at a forward P/E of 14.2x, down from historical premiums of 60x+, because investors are pricing in a scenario where AI automation kills the seat-based golden goose.

The market’s pessimism is extreme. Analysts have slashed price targets from $240 to $150. The consensus view is that agentic AI will commoditize software categories, turning Workday’s modules into features rather than destinations.

But here’s the asymmetry: if Workday can prove its unified data platform becomes more valuable in the AI era, if it can show that AI products added 1.5 points to ARR growth and that three-quarters of new deals include AI features, then the current valuation looks like a generational buying opportunity. The market is pricing in failure. The reality might be more nuanced.

The Efficiency Mirage: When Tokens Burn Faster Than Value

Let’s talk about the cost elephant in the room. One analysis of OpenClaw’s automation promises revealed it can be a “$200/month token-burning machine.” Agents that iterate endlessly, call APIs redundantly, and reprocess entire conversation histories are compute nightmares.

The efficiency gains are often illusory:

- Time savings: Yes, the agent did the task in 30 seconds. But you spent 20 minutes validating it didn’t hallucinate.

- Cost savings: Sure, you didn’t pay an associate. But you paid $50 in API calls to process a document deterministic tools handle for free.

- Productivity gains: The agent generated code, but you spent hours debugging edge cases it didn’t understand.

This is the “babysitting tax” of current agents. They’re not autonomous coworkers, they’re interns who need constant supervision, except these interns cost millions in infrastructure and can accidentally delete your email archive.
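The tax is easy to put in numbers. The sketch below takes the 20 minutes of validation and the $50 in API calls from the examples above; the $60 hourly rate and the 45 minutes of manual effort are assumptions chosen purely for illustration, so substitute your own figures.

```python
def labor_cost(minutes, hourly_rate):
    """Cost of human time in dollars."""
    return minutes * hourly_rate / 60


def net_saving(manual_minutes, review_minutes, api_cost, hourly_rate):
    """Positive means the agent saved money; negative means it cost more."""
    manual = labor_cost(manual_minutes, hourly_rate)
    agent = api_cost + labor_cost(review_minutes, hourly_rate)
    return manual - agent


# 20 review minutes and $50 of API calls come from the text above.
# The hourly rate and manual effort are hypothetical.
saving = net_saving(manual_minutes=45, review_minutes=20,
                    api_cost=50.0, hourly_rate=60.0)
print(saving)  # -25.0: the "30-second" task costs $25 more than doing it by hand
```

The 30 seconds of agent runtime never appears in the formula, which is the point: review time and API spend dominate the outcome.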

The Path Forward: From Hype to Hospital-Grade Reliability

If we want agentic AI to solve real problems, we need to stop optimizing for demos and start optimizing for production. The healthcare case studies show the way:

1. Start with Governance, Not Autonomy

Mayo Clinic’s agentic AI deployment for provider workflows didn’t begin with “let’s automate everything.” It began with explicit guardrails for decision-making, privacy, data quality, and transparency. Every action is traceable. Human escalation paths are clear. This is “hospital-grade AI”, where mistakes have consequences.
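As a rough illustration of what "traceable actions with clear escalation paths" can mean in code, here is a minimal sketch. It is not Mayo Clinic’s system; the action names, the allow-list, and the `human_approves` callback are all hypothetical. Every decision is logged, and anything outside the allow-list is blocked until a human explicitly approves it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditLog:
    """Append-only record of every decision the agent or a human makes."""
    entries: list = field(default_factory=list)

    def record(self, actor, action, decision):
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "decision": decision,
        })


# Hypothetical allow-list of low-risk actions the agent may take alone.
AUTO_APPROVED = {"draft_visit_summary", "schedule_reminder"}


def execute(action, log, human_approves=None):
    """Return True if the action may proceed. Every path writes to the log."""
    if action in AUTO_APPROVED:
        log.record("agent", action, "auto_approved")
        return True
    if human_approves is None:
        # No reviewer available: block the action and leave a trace.
        log.record("agent", action, "escalated")
        return False
    approved = bool(human_approves(action))
    log.record("human", action, "approved" if approved else "rejected")
    return approved


log = AuditLog()
execute("schedule_reminder", log)
execute("change_medication_order", log)
execute("change_medication_order", log, human_approves=lambda a: True)
print([entry["decision"] for entry in log.entries])
```

The default is the important design choice: an action the gate does not recognize is escalated, never silently allowed.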

2. Model the Domain, Not the Task

Floorboard AI’s air traffic control agent succeeds because it models airports as graphs, not because it has a better LLM. When you encode real-world constraints into the context layer, agents don’t hallucinate taxi routes, they compute them.

3. Measure What Matters

Deloitte’s research shows early adopters expect 20%+ cost savings, while “watchers” expect less than 10%. The difference? Early adopters are prioritizing multi-agent systems that redesign end-to-end workflows. Watchers are buying point solutions that automate discrete tasks. The ROI gap isn’t magical, it’s architectural.

4. Acknowledge the Readiness Gap

Before you deploy Level 4 agents, you need Level 2-3 maturity: basic AI tools, governance frameworks, trained staff, and clear metrics. Skipping stages doesn’t accelerate progress, it guarantees failure. The common pitfalls in deploying agentic AI systems to production are rarely technical. They’re organizational.

The Verdict: Are We Solving Real Problems?

Agentic AI is neither scam nor savior. It’s a powerful tool that’s being deployed incompetently by an industry addicted to demos and allergic to operational reality.

The problems we should solve:

- Healthcare’s administrative burden (40% of prior authorizations automated at MUSC Health)

- Government service delivery (Los Angeles training 27,000 employees on responsible AI use)

- Enterprise data silos (Quollio’s metadata agents providing governance without exposure)

The problems we’re actually solving:

- Converting PDFs to Excel (already solved, now with hallucinations!)

- Writing code that works in demos but fails in legacy codebases

- Burning tokens to automate tasks that were already automated

The gap isn’t technological. It’s that we’re building for the Twitter demo thread when we should be building for the IT helpdesk ticket that takes three months to resolve. We’re optimizing for investor pitch decks when we should be optimizing for the frontline worker who just wants to add someone to a listserv.

The real inflection point won’t come from a bigger model. It will come when we admit that the degrading reliability of larger LLMs, despite increases in scale, is inherent to the approach and not a bug the next release will fix. It will come when we stop chasing autonomous agents and start building governed systems that augment humans instead of replacing them, and when we price AI by outcomes, not actions.

Until then, we’ll remain stuck in this absurd paradox: everything is possible, yet nothing is actually changing. And your PDF-to-Excel agent will continue to be the $50M distraction that proves it.

Your AI maturity assessment: Before your next agentic AI project, honestly evaluate where you sit on the readiness spectrum. If you’re at Level 2, don’t pitch Level 5. If your data is fragmented, fix that before you automate. And if you’re solving a problem that already has a deterministic solution, stop. Just stop.

The future of agentic AI isn’t in the model. It’s in the context, the governance, and the humility to admit that sometimes the best agent is the one that doesn’t get built.